| Valgrind User Manual |

|---|

Table of Contents

This chapter describes the Valgrind core services, command-line options and behaviours. That means it is relevant regardless of what particular tool you are using. The information should be sufficient for you to make effective day-to-day use of Valgrind. Advanced topics related to the Valgrind core are described in Valgrind's core: advanced topics.

A point of terminology: most references to "Valgrind" in this chapter refer to the Valgrind core services.

Valgrind is designed to be as non-intrusive as possible. It works directly with existing executables. You don't need to recompile, relink, or otherwise modify the program to be checked.

You invoke Valgrind like this:

valgrind [valgrind-options] your-prog [your-prog-options]

The most important option is --tool which dictates

which Valgrind tool to run. For example, if want to run the command

ls -l using the memory-checking tool

Memcheck, issue this command:

valgrind --tool=memcheck ls -l

However, Memcheck is the default, so if you want to use it you can

omit the --tool option.

Regardless of which tool is in use, Valgrind takes control of your program before it starts. Debugging information is read from the executable and associated libraries, so that error messages and other outputs can be phrased in terms of source code locations, when appropriate.

Your program is then run on a synthetic CPU provided by the Valgrind core. As new code is executed for the first time, the core hands the code to the selected tool. The tool adds its own instrumentation code to this and hands the result back to the core, which coordinates the continued execution of this instrumented code.

The amount of instrumentation code added varies widely between tools. At one end of the scale, Memcheck adds code to check every memory access and every value computed, making it run 10-50 times slower than natively. At the other end of the spectrum, the minimal tool, called Nulgrind, adds no instrumentation at all and causes in total "only" about a 4 times slowdown.

Valgrind simulates every single instruction your program executes. Because of this, the active tool checks, or profiles, not only the code in your application but also in all supporting dynamically-linked libraries, including the C library, graphical libraries, and so on.

If you're using an error-detection tool, Valgrind may

detect errors in system libraries, for example the GNU C or X11

libraries, which you have to use. You might not be interested in these

errors, since you probably have no control over that code. Therefore,

Valgrind allows you to selectively suppress errors, by recording them in

a suppressions file which is read when Valgrind starts up. The build

mechanism selects default suppressions which give reasonable

behaviour for the OS and libraries detected on your machine.

To make it easier to write suppressions, you can use the

--gen-suppressions=yes option. This tells Valgrind to

print out a suppression for each reported error, which you can then

copy into a suppressions file.

Different error-checking tools report different kinds of errors. The suppression mechanism therefore allows you to say which tool or tool(s) each suppression applies to.

First off, consider whether it might be beneficial to recompile

your application and supporting libraries with debugging info enabled

(the -g option). Without debugging info, the best

Valgrind tools will be able to do is guess which function a particular

piece of code belongs to, which makes both error messages and profiling

output nearly useless. With -g, you'll get

messages which point directly to the relevant source code lines.

Another option you might like to consider, if you are working with

C++, is -fno-inline. That makes it easier to see the

function-call chain, which can help reduce confusion when navigating

around large C++ apps. For example, debugging

OpenOffice.org with Memcheck is a bit easier when using this option. You

don't have to do this, but doing so helps Valgrind produce more accurate

and less confusing error reports. Chances are you're set up like this

already, if you intended to debug your program with GNU GDB, or some

other debugger. Alternatively, the Valgrind option

--read-inline-info=yes instructs Valgrind to read

the debug information describing inlining information. With this,

function call chain will be properly shown, even when your application

is compiled with inlining.

If you are planning to use Memcheck: On rare

occasions, compiler optimisations (at -O2

and above, and sometimes -O1) have been

observed to generate code which fools Memcheck into wrongly reporting

uninitialised value errors, or missing uninitialised value errors. We have

looked in detail into fixing this, and unfortunately the result is that

doing so would give a further significant slowdown in what is already a slow

tool. So the best solution is to turn off optimisation altogether. Since

this often makes things unmanageably slow, a reasonable compromise is to use

-O. This gets you the majority of the

benefits of higher optimisation levels whilst keeping relatively small the

chances of false positives or false negatives from Memcheck. Also, you

should compile your code with -Wall because

it can identify some or all of the problems that Valgrind can miss at the

higher optimisation levels. (Using -Wall

is also a good idea in general.) All other tools (as far as we know) are

unaffected by optimisation level, and for profiling tools like Cachegrind it

is better to compile your program at its normal optimisation level.

Valgrind understands the DWARF2/3/4 formats used by GCC 3.1 and later. The reader for "stabs" debugging format (used by GCC versions prior to 3.1) has been disabled in Valgrind 3.9.0.

When you're ready to roll, run Valgrind as described above.

Note that you should run the real

(machine-code) executable here. If your application is started by, for

example, a shell or Perl script, you'll need to modify it to invoke

Valgrind on the real executables. Running such scripts directly under

Valgrind will result in you getting error reports pertaining to

/bin/sh,

/usr/bin/perl, or whatever interpreter

you're using. This may not be what you want and can be confusing. You

can force the issue by giving the option

--trace-children=yes, but confusion is still

likely.

Valgrind tools write a commentary, a stream of text, detailing error reports and other significant events. All lines in the commentary have following form:

==12345== some-message-from-Valgrind

The 12345 is the process ID.

This scheme makes it easy to distinguish program output from Valgrind

commentary, and also easy to differentiate commentaries from different

processes which have become merged together, for whatever reason.

By default, Valgrind tools write only essential messages to the

commentary, so as to avoid flooding you with information of secondary

importance. If you want more information about what is happening,

re-run, passing the -v option to Valgrind. A second

-v gives yet more detail.

You can direct the commentary to three different places:

The default: send it to a file descriptor, which is by default

2 (stderr). So, if you give the core no options, it will write

commentary to the standard error stream. If you want to send it to

some other file descriptor, for example number 9, you can specify

--log-fd=9.

This is the simplest and most common arrangement, but can cause problems when Valgrinding entire trees of processes which expect specific file descriptors, particularly stdin/stdout/stderr, to be available for their own use.

A less intrusive

option is to write the commentary to a file, which you specify by

--log-file=filename. There are special format

specifiers that can be used to use a process ID or an environment

variable name in the log file name. These are useful/necessary if your

program invokes multiple processes (especially for MPI programs).

See the basic options section

for more details.

The

least intrusive option is to send the commentary to a network

socket. The socket is specified as an IP address and port number

pair, like this: --log-socket=192.168.0.1:12345 if

you want to send the output to host IP 192.168.0.1 port 12345

(note: we

have no idea if 12345 is a port of pre-existing significance). You

can also omit the port number:

--log-socket=192.168.0.1, in which case a default

port of 1500 is used. This default is defined by the constant

VG_CLO_DEFAULT_LOGPORT in the

sources.

Note, unfortunately, that you have to use an IP address here, rather than a hostname.

Writing to a network socket is pointless if you don't

have something listening at the other end. We provide a simple

listener program,

valgrind-listener, which accepts

connections on the specified port and copies whatever it is sent to

stdout. Probably someone will tell us this is a horrible security

risk. It seems likely that people will write more sophisticated

listeners in the fullness of time.

valgrind-listener can accept

simultaneous connections from up to 50 Valgrinded processes. In front

of each line of output it prints the current number of active

connections in round brackets.

valgrind-listener accepts three

command-line options:

-e --exit-at-zeroWhen the number of connected processes falls back to zero, exit. Without this, it will run forever, that is, until you send it Control-C.

--max-connect=INTEGERBy default, the listener can connect to up to 50 processes.

Occasionally, that number is too small. Use this option to

provide a different limit. E.g.

--max-connect=100.

portnumberChanges the port it listens on from the default (1500).

The specified port must be in the range 1024 to 65535.

The same restriction applies to port numbers specified by a

--log-socket to Valgrind itself.

If a Valgrinded process fails to connect to a listener, for whatever reason (the listener isn't running, invalid or unreachable host or port, etc), Valgrind switches back to writing the commentary to stderr. The same goes for any process which loses an established connection to a listener. In other words, killing the listener doesn't kill the processes sending data to it.

Here is an important point about the relationship between the

commentary and profiling output from tools. The commentary contains a

mix of messages from the Valgrind core and the selected tool. If the

tool reports errors, it will report them to the commentary. However, if

the tool does profiling, the profile data will be written to a file of

some kind, depending on the tool, and independent of what

--log-* options are in force. The commentary is

intended to be a low-bandwidth, human-readable channel. Profiling data,

on the other hand, is usually voluminous and not meaningful without

further processing, which is why we have chosen this arrangement.

When an error-checking tool detects something bad happening in the program, an error message is written to the commentary. Here's an example from Memcheck:

==25832== Invalid read of size 4 ==25832== at 0x8048724: BandMatrix::ReSize(int, int, int) (bogon.cpp:45) ==25832== by 0x80487AF: main (bogon.cpp:66) ==25832== Address 0xBFFFF74C is not stack'd, malloc'd or free'd

This message says that the program did an illegal 4-byte read of

address 0xBFFFF74C, which, as far as Memcheck can tell, is not a valid

stack address, nor corresponds to any current heap blocks or recently freed

heap blocks. The read is happening at line 45 of

bogon.cpp, called from line 66 of the same file,

etc. For errors associated with an identified (current or freed) heap block,

for example reading freed memory, Valgrind reports not only the

location where the error happened, but also where the associated heap block

was allocated/freed.

Valgrind remembers all error reports. When an error is detected, it is compared against old reports, to see if it is a duplicate. If so, the error is noted, but no further commentary is emitted. This avoids you being swamped with bazillions of duplicate error reports.

If you want to know how many times each error occurred, run with

the -v option. When execution finishes, all the

reports are printed out, along with, and sorted by, their occurrence

counts. This makes it easy to see which errors have occurred most

frequently.

Errors are reported before the associated operation actually happens. For example, if you're using Memcheck and your program attempts to read from address zero, Memcheck will emit a message to this effect, and your program will then likely die with a segmentation fault.

In general, you should try and fix errors in the order that they are reported. Not doing so can be confusing. For example, a program which copies uninitialised values to several memory locations, and later uses them, will generate several error messages, when run on Memcheck. The first such error message may well give the most direct clue to the root cause of the problem.

The process of detecting duplicate errors is quite an

expensive one and can become a significant performance overhead

if your program generates huge quantities of errors. To avoid

serious problems, Valgrind will simply stop collecting

errors after 1,000 different errors have been seen, or 10,000,000 errors

in total have been seen. In this situation you might as well

stop your program and fix it, because Valgrind won't tell you

anything else useful after this. Note that the 1,000/10,000,000 limits

apply after suppressed errors are removed. These limits are

defined in m_errormgr.c and can be increased

if necessary.

To avoid this cutoff you can use the

--error-limit=no option. Then Valgrind will always show

errors, regardless of how many there are. Use this option carefully,

since it may have a bad effect on performance.

The error-checking tools detect numerous problems in the system

libraries, such as the C library,

which come pre-installed with your OS. You can't easily fix

these, but you don't want to see these errors (and yes, there are many!)

So Valgrind reads a list of errors to suppress at startup. A default

suppression file is created by the

./configure script when the system is

built.

You can modify and add to the suppressions file at your leisure, or, better, write your own. Multiple suppression files are allowed. This is useful if part of your project contains errors you can't or don't want to fix, yet you don't want to continuously be reminded of them.

Note: By far the easiest way to add

suppressions is to use the --gen-suppressions=yes option

described in Core Command-line Options. This generates

suppressions automatically. For best results,

though, you may want to edit the output

of --gen-suppressions=yes by hand, in which

case it would be advisable to read through this section.

Each error to be suppressed is described very specifically, to minimise the possibility that a suppression-directive inadvertently suppresses a bunch of similar errors which you did want to see. The suppression mechanism is designed to allow precise yet flexible specification of errors to suppress.

If you use the -v option, at the end of execution,

Valgrind prints out one line for each used suppression, giving the number of times

it got used, its name and the filename and line number where the suppression is

defined. Depending on the suppression kind, the filename and line number are optionally

followed by additional information (such as the number of blocks and bytes suppressed

by a Memcheck leak suppression). Here's the suppressions used by a

run of valgrind -v --tool=memcheck ls -l:

--1610-- used_suppression: 2 dl-hack3-cond-1 /usr/lib/valgrind/default.supp:1234 --1610-- used_suppression: 2 glibc-2.5.x-on-SUSE-10.2-(PPC)-2a /usr/lib/valgrind/default.supp:1234

Multiple suppressions files are allowed. Valgrind loads suppression

patterns from $PREFIX/lib/valgrind/default.supp unless

--default-suppressions=no has been specified. You can

ask to add suppressions from additional files by specifying

--suppressions=/path/to/file.supp one or more times.

If you want to understand more about suppressions, look at an

existing suppressions file whilst reading the following documentation.

The file glibc-2.3.supp, in the source

distribution, provides some good examples.

Blank and comment lines in a suppression file are ignored. Comment lines are made of 0 or more blanks followed by a # character followed by some text.

Each suppression has the following components:

First line: its name. This merely gives a handy name to the suppression, by which it is referred to in the summary of used suppressions printed out when a program finishes. It's not important what the name is; any identifying string will do.

Second line: name of the tool(s) that the suppression is for (if more than one, comma-separated), and the name of the suppression itself, separated by a colon (n.b.: no spaces are allowed), eg:

tool_name1,tool_name2:suppression_name

Recall that Valgrind is a modular system, in which different instrumentation tools can observe your program whilst it is running. Since different tools detect different kinds of errors, it is necessary to say which tool(s) the suppression is meaningful to.

Tools will complain, at startup, if a tool does not understand any suppression directed to it. Tools ignore suppressions which are not directed to them. As a result, it is quite practical to put suppressions for all tools into the same suppression file.

Next line: a small number of suppression types have extra

information after the second line (eg. the Param

suppression for Memcheck)

Remaining lines: This is the calling context for the error -- the chain of function calls that led to it. There can be up to 24 of these lines.

Locations may be names of either shared objects, functions,

or source lines. They begin with

obj:,

fun:, or

src: respectively. Function,

object, and file names to match against may use the wildcard characters

* and

?. Source lines are specified

using the form filename[:lineNumber].

Important note: C++ function names must be

mangled. If you are writing suppressions by

hand, use the --demangle=no option to get the

mangled names in your error messages. An example of a mangled

C++ name is _ZN9QListView4showEv.

This is the form that the GNU C++ compiler uses internally, and

the form that must be used in suppression files. The equivalent

demangled name, QListView::show(),

is what you see at the C++ source code level.

A location line may also be

simply "..." (three dots). This is

a frame-level wildcard, which matches zero or more frames. Frame

level wildcards are useful because they make it easy to ignore

varying numbers of uninteresting frames in between frames of

interest. That is often important when writing suppressions which

are intended to be robust against variations in the amount of

function inlining done by compilers.

Finally, the entire suppression must be between curly braces. Each brace must be the first character on its own line.

A suppression only suppresses an error when the error matches all the details in the suppression. Here's an example:

{

__gconv_transform_ascii_internal/__mbrtowc/mbtowc

Memcheck:Value4

fun:__gconv_transform_ascii_internal

fun:__mbr*toc

fun:mbtowc

}

What it means is: for Memcheck only, suppress a

use-of-uninitialised-value error, when the data size is 4, when it

occurs in the function

__gconv_transform_ascii_internal, when

that is called from any function of name matching

__mbr*toc, when that is called from

mbtowc. It doesn't apply under any

other circumstances. The string by which this suppression is identified

to the user is

__gconv_transform_ascii_internal/__mbrtowc/mbtowc.

(See Writing suppression files for more details on the specifics of Memcheck's suppression kinds.)

Another example, again for the Memcheck tool:

{

libX11.so.6.2/libX11.so.6.2/libXaw.so.7.0

Memcheck:Value4

obj:/usr/X11R6/lib/libX11.so.6.2

obj:/usr/X11R6/lib/libX11.so.6.2

obj:/usr/X11R6/lib/libXaw.so.7.0

}

This suppresses any size 4 uninitialised-value error which occurs

anywhere in libX11.so.6.2, when called from

anywhere in the same library, when called from anywhere in

libXaw.so.7.0. The inexact specification of

locations is regrettable, but is about all you can hope for, given that

the X11 libraries shipped on the Linux distro on which this example

was made have had their symbol tables removed.

An example of the src: specification, again for the Memcheck tool:

{

libX11.so.6.2/libX11.so.6.2/libXaw.so.7.0

Memcheck:Value4

src:valid.c:321

}

This suppresses any size-4 uninitialised-value error which occurs

at line 321 in valid.c.

Although the above two examples do not make this clear, you can

freely mix obj:,

fun:, and

src:

lines in a suppression.

Finally, here's an example using three frame-level wildcards:

{

a-contrived-example

Memcheck:Leak

fun:malloc

...

fun:ddd

...

fun:ccc

...

fun:main

}

This suppresses Memcheck memory-leak errors, in the case where

the allocation was done by main

calling (though any number of intermediaries, including zero)

ccc,

calling onwards via

ddd and eventually

to malloc..

Valgrind supports the downloading of debuginfo files via debuginfod, an HTTP server for distributing ELF/DWARF debugging information. When a debuginfo file cannot be found locally, Valgrind is able to query debuginfod servers for the file using its build-id.

In order to use this feature

debuginfod-find must be installed and

$DEBUGINFOD_URLS must contain URLs of

debuginfod servers. Valgrind does not support

debuginfod-find verbose output that is

normally enabled with $DEBUGINFOD_PROGRESS

and $DEBUGINFOD_VERBOSE. These environment

variables will be ignored.

For more information regarding debuginfod, see https://sourceware.org/elfutils/Debuginfod.html

As mentioned above, Valgrind's core accepts a common set of options. The tools also accept tool-specific options, which are documented separately for each tool.

Valgrind's default settings succeed in giving reasonable behaviour in most cases. We group the available options by rough categories.

These options work with all tools.

-h --help

Show help for all options, both for the core and for the

selected tool. If the option is repeated it is equivalent to giving

--help-debug.

--help-debug

Same as --help, but also lists debugging

options which usually are only of use to Valgrind's

developers.

--version

Show the version number of the Valgrind core. Tools can have their own version numbers. There is a scheme in place to ensure that tools only execute when the core version is one they are known to work with. This was done to minimise the chances of strange problems arising from tool-vs-core version incompatibilities.

-q, --quiet

Run silently, and only print error messages. Useful if you are running regression tests or have some other automated test machinery.

-v, --verbose

Be more verbose. Gives extra information on various aspects of your program, such as: the shared objects loaded, the suppressions used, the progress of the instrumentation and execution engines, and warnings about unusual behaviour. Repeating the option increases the verbosity level.

--trace-children=<yes|no> [default: no]

When enabled, Valgrind will trace into sub-processes

initiated via the exec system call. This is

necessary for multi-process programs.

Note that Valgrind does trace into the child of a

fork (it would be difficult not to, since

fork makes an identical copy of a process), so this

option is arguably badly named. However, most children of

fork calls immediately call exec

anyway.

--trace-children-skip=patt1,patt2,...

This option only has an effect when

--trace-children=yes is specified. It allows

for some children to be skipped. The option takes a comma

separated list of patterns for the names of child executables

that Valgrind should not trace into. Patterns may include the

metacharacters ?

and *, which have the usual

meaning.

This can be useful for pruning uninteresting branches from a tree of processes being run on Valgrind. But you should be careful when using it. When Valgrind skips tracing into an executable, it doesn't just skip tracing that executable, it also skips tracing any of that executable's child processes. In other words, the flag doesn't merely cause tracing to stop at the specified executables -- it skips tracing of entire process subtrees rooted at any of the specified executables.

--trace-children-skip-by-arg=patt1,patt2,...

This is the same as

--trace-children-skip, with one difference:

the decision as to whether to trace into a child process is

made by examining the arguments to the child process, rather

than the name of its executable.

--child-silent-after-fork=<yes|no> [default: no]

When enabled, Valgrind will not show any debugging or

logging output for the child process resulting from

a fork call. This can make the output less

confusing (although more misleading) when dealing with processes

that create children. It is particularly useful in conjunction

with --trace-children=. Use of this option is also

strongly recommended if you are requesting XML output

(--xml=yes), since otherwise the XML from child and

parent may become mixed up, which usually makes it useless.

--vgdb=<no|yes|full> [default: yes]

Valgrind will provide "gdbserver" functionality when

--vgdb=yes or --vgdb=full is

specified. This allows an external GNU GDB debugger to control

and debug your program when it runs on Valgrind.

--vgdb=full incurs significant performance

overheads, but provides more precise breakpoints and

watchpoints. See Debugging your program using Valgrind's gdbserver and GDB for

a detailed description.

If the embedded gdbserver is enabled but no gdb is currently being used, the vgdb command line utility can send "monitor commands" to Valgrind from a shell. The Valgrind core provides a set of Valgrind monitor commands. A tool can optionally provide tool specific monitor commands, which are documented in the tool specific chapter.

--vgdb-error=<number> [default: 999999999]

Use this option when the Valgrind gdbserver is enabled with

--vgdb=yes or --vgdb=full.

Tools that report errors will wait

for "number" errors to be

reported before freezing the program and waiting for you to

connect with GDB. It follows that a value of zero will cause

the gdbserver to be started before your program is executed.

This is typically used to insert GDB breakpoints before

execution, and also works with tools that do not report

errors, such as Massif.

--vgdb-stop-at=<set> [default: none]

Use this option when the Valgrind gdbserver is enabled with

--vgdb=yes or --vgdb=full.

The Valgrind gdbserver will be invoked for each error after

--vgdb-error have been reported.

You can additionally ask the Valgrind gdbserver to be invoked

for other events, specified in one of the following ways:

a comma separated list of one or more of

startup exit valgrindabexit.

The values startup exit

valgrindabexit respectively indicate to

invoke gdbserver before your program is executed, after the

last instruction of your program, on Valgrind abnormal exit

(e.g. internal error, out of memory, ...).

Note: startup and

--vgdb-error=0 will both cause Valgrind

gdbserver to be invoked before your program is executed. The

--vgdb-error=0 will in addition cause your

program to stop on all subsequent errors.

all to specify the complete set.

It is equivalent to

--vgdb-stop-at=startup,exit,valgrindabexit.

none for the empty set.

--track-fds=<yes|no|all> [default: no]

When enabled, Valgrind will print out a list of open file

descriptors on exit or on request, via the gdbserver monitor

command v.info open_fds. Along with each

file descriptor is printed a stack backtrace of where the file

was opened and any details relating to the file descriptor such

as the file name or socket details. Use all to

include reporting on stdin,

stdout and

stderr.

--time-stamp=<yes|no> [default: no]

When enabled, each message is preceded with an indication of the elapsed wallclock time since startup, expressed as days, hours, minutes, seconds and milliseconds.

--log-fd=<number> [default: 2, stderr]

Specifies that Valgrind should send all of its messages to the specified file descriptor. The default, 2, is the standard error channel (stderr). Note that this may interfere with the client's own use of stderr, as Valgrind's output will be interleaved with any output that the client sends to stderr.

--log-file=<filename>

Specifies that Valgrind should send all of its messages to the specified file. If the file name is empty, it causes an abort. There are three special format specifiers that can be used in the file name.

%p is replaced with the current process ID.

This is very useful for program that invoke multiple processes.

WARNING: If you use --trace-children=yes and your

program invokes multiple processes OR your program forks without

calling exec afterwards, and you don't use this specifier

(or the %q specifier below), the Valgrind output from

all those processes will go into one file, possibly jumbled up, and

possibly incomplete. Note: If the program forks and calls exec afterwards,

Valgrind output of the child from the period between fork and exec

will be lost. Fortunately this gap is really tiny for most programs;

and modern programs use posix_spawn

anyway.

%n is replaced with a file sequence number

unique for this process.

This is useful for processes that produces several files

from the same filename template.

%q{FOO} is replaced with the contents of the

environment variable FOO. If the

{FOO} part is malformed, it causes an abort. This

specifier is rarely needed, but very useful in certain circumstances

(eg. when running MPI programs). The idea is that you specify a

variable which will be set differently for each process in the job,

for example BPROC_RANK or whatever is

applicable in your MPI setup. If the named environment variable is not

set, it causes an abort. Note that in some shells, the

{ and } characters may need to be

escaped with a backslash.

%% is replaced with %.

If an % is followed by any other character, it

causes an abort.

If the file name specifies a relative file name, it is put in the program's initial working directory: this is the current directory when the program started its execution after the fork or after the exec. If it specifies an absolute file name (ie. starts with '/') then it is put there.

--log-socket=<ip-address:port-number>

Specifies that Valgrind should send all of its messages to

the specified port at the specified IP address. The port may be

omitted, in which case port 1500 is used. If a connection cannot

be made to the specified socket, Valgrind falls back to writing

output to the standard error (stderr). This option is intended to

be used in conjunction with the

valgrind-listener program. For

further details, see

the commentary

in the manual.

These options are used by all tools that can report errors, e.g. Memcheck, but not Cachegrind.

--xml=<yes|no> [default: no]

When enabled, the important parts of the output (e.g. tool error

messages) will be in XML format rather than plain text. Furthermore,

the XML output will be sent to a different output channel than the

plain text output. Therefore, you also must use one of

--xml-fd, --xml-file or

--xml-socket to specify where the XML is to be sent.

Less important messages will still be printed in plain text, but

because the XML output and plain text output are sent to different

output channels (the destination of the plain text output is still

controlled by --log-fd, --log-file

and --log-socket) this should not cause problems.

This option is aimed at making life easier for tools that consume

Valgrind's output as input, such as GUI front ends. Currently this

option works with Memcheck, Helgrind and DRD. The output format is

specified in the file

docs/internals/xml-output-protocol4.txt

in the source tree for Valgrind 3.5.0 or later.

The recommended options for a GUI to pass, when requesting

XML output, are: --xml=yes to enable XML output,

--xml-file to send the XML output to a (presumably

GUI-selected) file, --log-file to send the plain

text output to a second GUI-selected file,

--child-silent-after-fork=yes, and

-q to restrict the plain text output to critical

error messages created by Valgrind itself. For example, failure to

read a specified suppressions file counts as a critical error message.

In this way, for a successful run the text output file will be empty.

But if it isn't empty, then it will contain important information

which the GUI user should be made aware

of.

--xml-fd=<number> [default: -1, disabled]

Specifies that Valgrind should send its XML output to the

specified file descriptor. It must be used in conjunction with

--xml=yes.

--xml-file=<filename>

Specifies that Valgrind should send its XML output

to the specified file. It must be used in conjunction with

--xml=yes. Any %p or

%q sequences appearing in the filename are expanded

in exactly the same way as they are for --log-file.

See the description of --log-file for details.

--xml-socket=<ip-address:port-number>

Specifies that Valgrind should send its XML output the

specified port at the specified IP address. It must be used in

conjunction with --xml=yes. The form of the argument

is the same as that used by --log-socket.

See the description of --log-socket

for further details.

--xml-user-comment=<string>

Embeds an extra user comment string at the start of the XML

output. Only works when --xml=yes is specified;

ignored otherwise.

--demangle=<yes|no> [default: yes]

Enable/disable automatic demangling (decoding) of C++ names. Enabled by default. When enabled, Valgrind will attempt to translate encoded C++ names back to something approaching the original. The demangler handles symbols mangled by g++ versions 2.X, 3.X and 4.X.

An important fact about demangling is that function names mentioned in suppressions files should be in their mangled form. Valgrind does not demangle function names when searching for applicable suppressions, because to do otherwise would make suppression file contents dependent on the state of Valgrind's demangling machinery, and also slow down suppression matching.

--num-callers=<number> [default: 12]

Specifies the maximum number of entries shown in stack traces that identify program locations. Note that errors are commoned up using only the top four function locations (the place in the current function, and that of its three immediate callers). So this doesn't affect the total number of errors reported.

The maximum value for this is 500. Note that higher settings will make Valgrind run a bit more slowly and take a bit more memory, but can be useful when working with programs with deeply-nested call chains.

--unw-stack-scan-thresh=<number> [default: 0]

,

--unw-stack-scan-frames=<number> [default: 5]

Stack-scanning support is available only on ARM targets.

These flags enable and control stack unwinding by stack scanning. When the normal stack unwinding mechanisms -- usage of Dwarf CFI records, and frame-pointer following -- fail, stack scanning may be able to recover a stack trace.

Note that stack scanning is an imprecise, heuristic mechanism that may give very misleading results, or none at all. It should be used only in emergencies, when normal unwinding fails, and it is important to nevertheless have stack traces.

Stack scanning is a simple technique: the unwinder reads words from the stack, and tries to guess which of them might be return addresses, by checking to see if they point just after ARM or Thumb call instructions. If so, the word is added to the backtrace.

The main danger occurs when a function call returns, leaving its return address exposed, and a new function is called, but the new function does not overwrite the old address. The result of this is that the backtrace may contain entries for functions which have already returned, and so be very confusing.

A second limitation of this implementation is that it will scan only the page (4KB, normally) containing the starting stack pointer. If the stack frames are large, this may result in only a few (or not even any) being present in the trace. Also, if you are unlucky and have an initial stack pointer near the end of its containing page, the scan may miss all interesting frames.

By default stack scanning is disabled. The normal use

case is to ask for it when a stack trace would otherwise be very

short. So, to enable it,

use --unw-stack-scan-thresh=number.

This requests Valgrind to try using stack scanning to "extend"

stack traces which contain fewer

than number frames.

If stack scanning does take place, it will only generate

at most the number of frames specified

by --unw-stack-scan-frames.

Typically, stack scanning generates so many garbage entries that

this value is set to a low value (5) by default. In no case

will a stack trace larger than the value specified

by --num-callers be

created.

--error-limit=<yes|no> [default: yes]

When enabled, Valgrind stops reporting errors after 10,000,000 in total, or 1,000 different ones, have been seen. This is to stop the error tracking machinery from becoming a huge performance overhead in programs with many errors.

--error-exitcode=<number> [default: 0]

Specifies an alternative exit code to return if Valgrind reported any errors in the run. When set to the default value (zero), the return value from Valgrind will always be the return value of the process being simulated. When set to a nonzero value, that value is returned instead, if Valgrind detects any errors. This is useful for using Valgrind as part of an automated test suite, since it makes it easy to detect test cases for which Valgrind has reported errors, just by inspecting return codes.

--exit-on-first-error=<yes|no> [default: no]

If this option is enabled, Valgrind exits on the first error.

A nonzero exit value must be defined using

--error-exitcode option.

Useful if you are running regression tests or have some other

automated test machinery.

--error-markers=<begin>,<end> [default: none]

When errors are output as plain text (i.e. XML not used),

--error-markers instructs to output a line

containing the begin (end)

string before (after) each error.

Such marker lines facilitate searching for errors and/or extracting errors in an output file that contain valgrind errors mixed with the program output.

Note that empty markers are accepted. So, only using a begin (or an end) marker is possible.

--show-error-list=no|yes [default: no]

If this option is enabled, for tools that report errors, valgrind will show the list of detected errors and the list of used suppressions at exit.

Note that at verbosity 2 and above, valgrind automatically shows

the list of detected errors and the list of used suppressions

at exit, unless --show-error-list=no is selected.

-s

Specifying -s is equivalent to

--show-error-list=yes.

--sigill-diagnostics=<yes|no> [default: yes]

Enable/disable printing of illegal instruction diagnostics.

Enabled by default, but defaults to disabled when

--quiet is given. The default can always be explicitly

overridden by giving this option.

When enabled, a warning message will be printed, along with some diagnostics, whenever an instruction is encountered that Valgrind cannot decode or translate, before the program is given a SIGILL signal. Often an illegal instruction indicates a bug in the program or missing support for the particular instruction in Valgrind. But some programs do deliberately try to execute an instruction that might be missing and trap the SIGILL signal to detect processor features. Using this flag makes it possible to avoid the diagnostic output that you would otherwise get in such cases.

--keep-debuginfo=<yes|no> [default: no]

When enabled, keep ("archive") symbols and all other debuginfo for unloaded code. This allows saved stack traces to include file/line info for code that has been dlclose'd (or similar). Be careful with this, since it can lead to unbounded memory use for programs which repeatedly load and unload shared objects.

Some tools and some functionalities have only limited support

for archived debug info. Memcheck fully supports it. Generally,

tools that report errors can use archived debug info to show the error

stack traces. The known limitations are: Helgrind's past access stack

trace of a race condition is does not use archived debug info. Massif

(and more generally the xtree Massif output format) does not make use

of archived debug info. Only Memcheck has been (somewhat) tested

with --keep-debuginfo=yes, so other tools may have

unknown limitations.

--show-below-main=<yes|no> [default: no]

By default, stack traces for errors do not show any

functions that appear beneath main because

most of the time it's uninteresting C library stuff and/or

gobbledygook. Alternatively, if main is not

present in the stack trace, stack traces will not show any functions

below main-like functions such as glibc's

__libc_start_main. Furthermore, if

main-like functions are present in the trace,

they are normalised as (below main), in order to

make the output more deterministic.

If this option is enabled, all stack trace entries will be

shown and main-like functions will not be

normalised.

--fullpath-after=<string>

[default: don't show source paths]

By default Valgrind only shows the filenames in stack

traces, but not full paths to source files. When using Valgrind

in large projects where the sources reside in multiple different

directories, this can be inconvenient.

--fullpath-after provides a flexible solution

to this problem. When this option is present, the path to each

source file is shown, with the following all-important caveat:

if string is found in the path, then the path

up to and including string is omitted, else the

path is shown unmodified. Note that string is

not required to be a prefix of the path.

For example, consider a file named

/home/janedoe/blah/src/foo/bar/xyzzy.c.

Specifying --fullpath-after=/home/janedoe/blah/src/

will cause Valgrind to show the name

as foo/bar/xyzzy.c.

Because the string is not required to be a prefix,

--fullpath-after=src/ will produce the same

output. This is useful when the path contains arbitrary

machine-generated characters. For example, the

path

/my/build/dir/C32A1B47/blah/src/foo/xyzzy

can be pruned to foo/xyzzy

using

--fullpath-after=/blah/src/.

If you simply want to see the full path, just specify an

empty string: --fullpath-after=. This isn't a

special case, merely a logical consequence of the above rules.

Finally, you can use --fullpath-after

multiple times. Any appearance of it causes Valgrind to switch

to producing full paths and applying the above filtering rule.

Each produced path is compared against all

the --fullpath-after-specified strings, in the

order specified. The first string to match causes the path to

be truncated as described above. If none match, the full path

is shown. This facilitates chopping off prefixes when the

sources are drawn from a number of unrelated directories.

--extra-debuginfo-path=<path> [default: undefined and unused]

By default Valgrind searches in several well-known paths

for debug objects, such

as /usr/lib/debug/.

However, there may be scenarios where you may wish to put debug objects at an arbitrary location, such as external storage when running Valgrind on a mobile device with limited local storage. Another example might be a situation where you do not have permission to install debug object packages on the system where you are running Valgrind.

In these scenarios, you may provide an absolute path as an extra,

final place for Valgrind to search for debug objects by specifying

--extra-debuginfo-path=/path/to/debug/objects.

The given path will be prepended to the absolute path name of

the searched-for object. For example, if Valgrind is looking

for the debuginfo

for /w/x/y/zz.so

and --extra-debuginfo-path=/a/b/c is specified,

it will look for a debug object at

/a/b/c/w/x/y/zz.so.

This flag should only be specified once. If it is specified multiple times, only the last instance is honoured.

--debuginfo-server=ipaddr:port [default: undefined and unused]

This is a new, experimental, feature introduced in version 3.9.0.

In some scenarios it may be convenient to read debuginfo

from objects stored on a different machine. With this flag,

Valgrind will query a debuginfo server running

on ipaddr and listening on

port port, if it cannot find

the debuginfo object in the local filesystem.

The debuginfo server must accept TCP connections on

port port. The debuginfo

server is contained in the source

file auxprogs/valgrind-di-server.c.

It will only serve from the directory it is started

in. port defaults to 1500 in

both client and server if not specified.

If Valgrind looks for the debuginfo for

/w/x/y/zz.so by using the

debuginfo server, it will strip the pathname components and

merely request zz.so on the

server. That in turn will look only in its current working

directory for a matching debuginfo object.

The debuginfo data is transmitted in small fragments (8 KB) as requested by Valgrind. Each block is compressed using LZO to reduce transmission time. The implementation has been tuned for best performance over a single-stage 802.11g (WiFi) network link.

Note that checks for matching primary vs debug objects,

using GNU debuglink CRC scheme, are performed even when using

the debuginfo server. To disable such checking, you need to

also specify

--allow-mismatched-debuginfo=yes.

By default the Valgrind build system will

build valgrind-di-server for

the target platform, which is almost certainly not what you

want. So far we have been unable to find out how to get

automake/autoconf to build it for the build platform. If

you want to use it, you will have to recompile it by hand using

the command shown at the top

of auxprogs/valgrind-di-server.c.

Valgrind can also download debuginfo via debuginfod. See the DEBUGINFOD section for more information.

--allow-mismatched-debuginfo=no|yes [no]

When reading debuginfo from separate debuginfo objects, Valgrind will by default check that the main and debuginfo objects match, using the GNU debuglink mechanism. This guarantees that it does not read debuginfo from out of date debuginfo objects, and also ensures that Valgrind can't crash as a result of mismatches.

This check can be overridden using

--allow-mismatched-debuginfo=yes.

This may be useful when the debuginfo and main objects have not

been split in the proper way. Be careful when using this,

though: it disables all consistency checking, and Valgrind has

been observed to crash when the main and debuginfo objects don't

match.

--suppressions=<filename> [default: $PREFIX/lib/valgrind/default.supp]

Specifies an extra file from which to read descriptions of errors to suppress. You may use up to 100 extra suppression files.

--gen-suppressions=<yes|no|all> [default: no]

When set to yes, Valgrind will pause

after every error shown and print the line:

---- Print suppression ? --- [Return/N/n/Y/y/C/c] ----

Pressing Ret, or N Ret or

n Ret, causes Valgrind continue execution without

printing a suppression for this error.

Pressing Y Ret or

y Ret causes Valgrind to write a suppression

for this error. You can then cut and paste it into a suppression file

if you don't want to hear about the error in the future.

When set to all, Valgrind will print a

suppression for every reported error, without querying the

user.

This option is particularly useful with C++ programs, as it prints out the suppressions with mangled names, as required.

Note that the suppressions printed are as specific as possible. You may want to common up similar ones, by adding wildcards to function names, and by using frame-level wildcards. The wildcarding facilities are powerful yet flexible, and with a bit of careful editing, you may be able to suppress a whole family of related errors with only a few suppressions.

Sometimes two different errors

are suppressed by the same suppression, in which case Valgrind

will output the suppression more than once, but you only need to

have one copy in your suppression file (but having more than one

won't cause problems). Also, the suppression name is given as

<insert a suppression name

here>; the name doesn't really matter, it's

only used with the -v option which prints out all

used suppression records.

--input-fd=<number> [default: 0, stdin]

When using

--gen-suppressions=yes, Valgrind will stop so as

to read keyboard input from you when each error occurs. By

default it reads from the standard input (stdin), which is

problematic for programs which close stdin. This option allows

you to specify an alternative file descriptor from which to read

input.

--dsymutil=no|yes [yes]

This option is only relevant when running Valgrind on Mac OS X.

Mac OS X uses a deferred debug information (debuginfo)

linking scheme. When object files containing debuginfo are

linked into a .dylib or an

executable, the debuginfo is not copied into the final file.

Instead, the debuginfo must be linked manually by

running dsymutil, a

system-provided utility, on the executable

or .dylib. The resulting

combined debuginfo is placed in a directory alongside the

executable or .dylib, but with

the extension .dSYM.

With --dsymutil=no, Valgrind

will detect cases where the

.dSYM directory is either

missing, or is present but does not appear to match the

associated executable or .dylib,

most likely because it is out of date. In these cases, Valgrind

will print a warning message but take no further action.

With --dsymutil=yes, Valgrind

will, in such cases, automatically

run dsymutil as necessary to

bring the debuginfo up to date. For all practical purposes, if

you always use --dsymutil=yes, then

there is never any need to

run dsymutil manually or as part

of your applications's build system, since Valgrind will run it

as necessary.

Valgrind will not attempt to

run dsymutil on any

executable or library in

/usr/,

/bin/,

/sbin/,

/opt/,

/sw/,

/System/,

/Library/ or

/Applications/

since dsymutil will always fail

in such situations. It fails both because the debuginfo for

such pre-installed system components is not available anywhere,

and also because it would require write privileges in those

directories.

Be careful when

using --dsymutil=yes, since it will

cause pre-existing .dSYM

directories to be silently deleted and re-created. Also note that

dsymutil is quite slow, sometimes

excessively so.

--max-stackframe=<number> [default: 2000000]

The maximum size of a stack frame. If the stack pointer moves by more than this amount then Valgrind will assume that the program is switching to a different stack.

You may need to use this option if your program has large stack-allocated arrays. Valgrind keeps track of your program's stack pointer. If it changes by more than the threshold amount, Valgrind assumes your program is switching to a different stack, and Memcheck behaves differently than it would for a stack pointer change smaller than the threshold. Usually this heuristic works well. However, if your program allocates large structures on the stack, this heuristic will be fooled, and Memcheck will subsequently report large numbers of invalid stack accesses. This option allows you to change the threshold to a different value.

You should only consider use of this option if Valgrind's debug output directs you to do so. In that case it will tell you the new threshold you should specify.

In general, allocating large structures on the stack is a bad idea, because you can easily run out of stack space, especially on systems with limited memory or which expect to support large numbers of threads each with a small stack, and also because the error checking performed by Memcheck is more effective for heap-allocated data than for stack-allocated data. If you have to use this option, you may wish to consider rewriting your code to allocate on the heap rather than on the stack.

--main-stacksize=<number>

[default: use current 'ulimit' value]

Specifies the size of the main thread's stack.

To simplify its memory management, Valgrind reserves all required space for the main thread's stack at startup. That means it needs to know the required stack size at startup.

By default, Valgrind uses the current "ulimit" value for the stack size, or 16 MB, whichever is lower. In many cases this gives a stack size in the range 8 to 16 MB, which almost never overflows for most applications.

If you need a larger total stack size,

use --main-stacksize to specify it. Only set

it as high as you need, since reserving far more space than you

need (that is, hundreds of megabytes more than you need)

constrains Valgrind's memory allocators and may reduce the total

amount of memory that Valgrind can use. This is only really of

significance on 32-bit machines.

On Linux, you may request a stack of size up to 2GB. Valgrind will stop with a diagnostic message if the stack cannot be allocated.

--main-stacksize only affects the stack

size for the program's initial thread. It has no bearing on the

size of thread stacks, as Valgrind does not allocate

those.

You may need to use both --main-stacksize

and --max-stackframe together. It is important

to understand that --main-stacksize sets the

maximum total stack size,

whilst --max-stackframe specifies the largest

size of any one stack frame. You will have to work out

the --main-stacksize value for yourself

(usually, if your applications segfaults). But Valgrind will

tell you the needed --max-stackframe size, if

necessary.

As discussed further in the description

of --max-stackframe, a requirement for a large

stack is a sign of potential portability problems. You are best

advised to place all large data in heap-allocated memory.

--max-threads=<number> [default: 500]

By default, Valgrind can handle to up to 500 threads.

Occasionally, that number is too small. Use this option to

provide a different limit. E.g.

--max-threads=3000.

For tools that use their own version of

malloc (e.g. Memcheck,

Massif, Helgrind, DRD), the following options apply.

--alignment=<number> [default: 8 or 16, depending on the platform]

By default Valgrind's malloc,

realloc, etc, return a block whose starting

address is 8-byte aligned or 16-byte aligned (the value depends on the

platform and matches the platform default). This option allows you to

specify a different alignment. The supplied value must be greater

than or equal to the default, less than or equal to 4096, and must be

a power of two.

--redzone-size=<number> [default: depends on the tool]

Valgrind's malloc, realloc, etc, add

padding blocks before and after each heap block allocated by the

program being run. Such padding blocks are called redzones. The

default value for the redzone size depends on the tool. For

example, Memcheck adds and protects a minimum of 16 bytes before

and after each block allocated by the client. This allows it to

detect block underruns or overruns of up to 16 bytes.

Increasing the redzone size makes it possible to detect overruns of larger distances, but increases the amount of memory used by Valgrind. Decreasing the redzone size will reduce the memory needed by Valgrind but also reduces the chances of detecting over/underruns, so is not recommended.

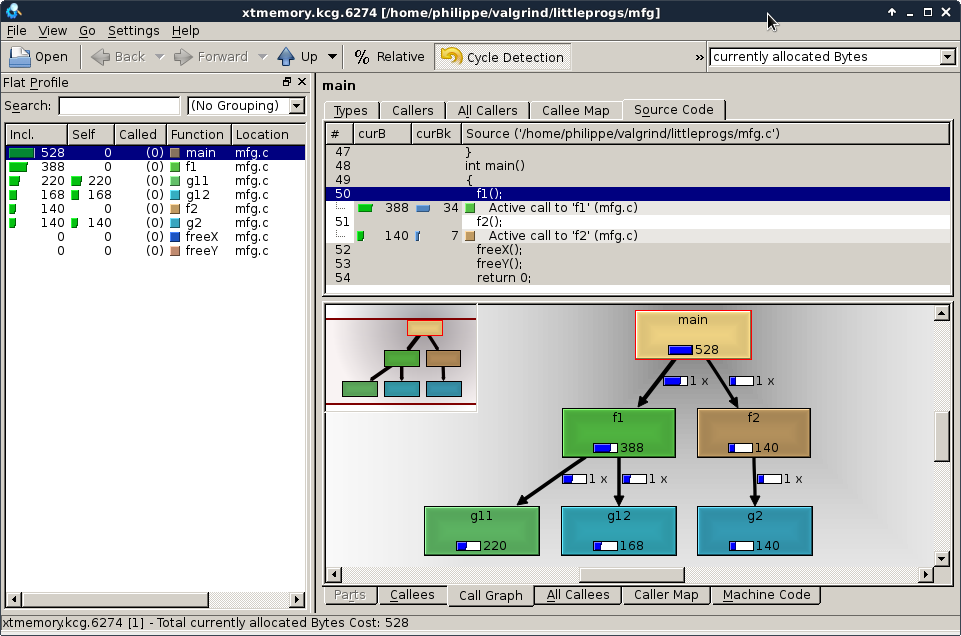

--xtree-memory=none|allocs|full [none]

Tools replacing Valgrind's malloc,

realloc, etc, can optionally produce an execution

tree detailing which piece of code is responsible for heap

memory usage. See Execution Trees

for a detailed explanation about execution trees.

When set to none, no memory execution

tree is produced.

When set to allocs, the memory

execution tree gives the current number of allocated bytes and

the current number of allocated blocks.

When set to full, the memory execution

tree gives 6 different measurements : the current number of

allocated bytes and blocks (same values as

for allocs), the total number of allocated

bytes and blocks, the total number of freed bytes and

blocks.

Note that the overhead in cpu and memory to produce

an xtree depends on the tool. The overhead in cpu is small for

the value allocs, as the information needed

to produce this report is maintained in any case by the tool.

For massif and helgrind, specifying full

implies to capture a stack trace for each free operation,

while normally these tools only capture an allocation stack

trace. For Memcheck, the cpu overhead for the

value full is small, as this can only be

used in combination with

--keep-stacktraces=alloc-and-free or

--keep-stacktraces=alloc-then-free, which

already records a stack trace for each free operation. The

memory overhead varies between 5 and 10 words per unique

stacktrace in the xtree, plus the memory needed to record the

stack trace for the free operations, if needed specifically

for the xtree.

--xtree-memory-file=<filename> [default:

xtmemory.kcg.%p]

Specifies that Valgrind should produce the xtree memory

report in the specified file. Any %p or

%q sequences appearing in the filename are expanded

in exactly the same way as they are for --log-file.

See the description of --log-file

for details.

If the filename contains the extension .ms,

then the produced file format will be a massif output file format.

If the filename contains the extension .kcg

or no extension is provided or recognised,

then the produced file format will be a callgrind output format.

See Execution Trees for a detailed explanation about execution trees formats.

These options apply to all tools, as they affect certain obscure workings of the Valgrind core. Most people won't need to use them.

--smc-check=<none|stack|all|all-non-file>

[default: all-non-file for x86/amd64/s390x, stack for other archs]

This option controls Valgrind's detection of self-modifying code. If no checking is done, when a program executes some code, then overwrites it with new code, and executes the new code, Valgrind will continue to execute the translations it made for the old code. This will likely lead to incorrect behaviour and/or crashes.

For "modern" architectures -- anything that's not x86,

amd64 or s390x -- the default is stack.

This is because a correct program must take explicit action

to reestablish D-I cache coherence following code

modification. Valgrind observes and honours such actions,

with the result that self-modifying code is transparently

handled with zero extra cost.

For x86, amd64 and s390x, the program is not required to

notify the hardware of required D-I coherence syncing. Hence

the default is all-non-file, which covers

the normal case of generating code into an anonymous

(non-file-backed) mmap'd area.

The meanings of the four available settings are as

follows. No detection (none),

detect self-modifying code

on the stack (which is used by GCC to implement nested

functions) (stack), detect self-modifying code

everywhere (all), and detect

self-modifying code everywhere except in file-backed

mappings (all-non-file).

Running with all will slow Valgrind

down noticeably. Running with none will

rarely speed things up, since very little code gets

dynamically generated in most programs. The

VALGRIND_DISCARD_TRANSLATIONS client

request is an alternative to --smc-check=all

and --smc-check=all-non-file

that requires more programmer effort but allows Valgrind to run

your program faster, by telling it precisely when translations

need to be re-made.

--smc-check=all-non-file provides a

cheaper but more limited version

of --smc-check=all. It adds checks to any

translations that do not originate from file-backed memory

mappings. Typical applications that generate code, for example

JITs in web browsers, generate code into anonymous mmaped areas,

whereas the "fixed" code of the browser always lives in

file-backed mappings. --smc-check=all-non-file

takes advantage of this observation, limiting the overhead of

checking to code which is likely to be JIT generated.

--read-inline-info=<yes|no> [default: see below]

When enabled, Valgrind will read information about inlined

function calls from DWARF3 debug info. This slows Valgrind

startup and makes it use more memory (typically for each inlined

piece of code, 6 words and space for the function name), but it

results in more descriptive stacktraces. Currently,

this functionality is enabled by default only for Linux,

Android and Solaris targets and only for the tools Memcheck, Massif,

Helgrind and DRD. Here is an example of some stacktraces with

--read-inline-info=no:

==15380== Conditional jump or move depends on uninitialised value(s) ==15380== at 0x80484EA: main (inlinfo.c:6) ==15380== ==15380== Conditional jump or move depends on uninitialised value(s) ==15380== at 0x8048550: fun_noninline (inlinfo.c:6) ==15380== by 0x804850E: main (inlinfo.c:34) ==15380== ==15380== Conditional jump or move depends on uninitialised value(s) ==15380== at 0x8048520: main (inlinfo.c:6)

And here are the same errors with

--read-inline-info=yes:

==15377== Conditional jump or move depends on uninitialised value(s) ==15377== at 0x80484EA: fun_d (inlinfo.c:6) ==15377== by 0x80484EA: fun_c (inlinfo.c:14) ==15377== by 0x80484EA: fun_b (inlinfo.c:20) ==15377== by 0x80484EA: fun_a (inlinfo.c:26) ==15377== by 0x80484EA: main (inlinfo.c:33) ==15377== ==15377== Conditional jump or move depends on uninitialised value(s) ==15377== at 0x8048550: fun_d (inlinfo.c:6) ==15377== by 0x8048550: fun_noninline (inlinfo.c:41) ==15377== by 0x804850E: main (inlinfo.c:34) ==15377== ==15377== Conditional jump or move depends on uninitialised value(s) ==15377== at 0x8048520: fun_d (inlinfo.c:6) ==15377== by 0x8048520: main (inlinfo.c:35)

--read-var-info=<yes|no> [default: no]

When enabled, Valgrind will read information about variable types and locations from DWARF3 debug info. This slows Valgrind startup significantly and makes it use significantly more memory, but for the tools that can take advantage of it (Memcheck, Helgrind, DRD) it can result in more precise error messages. For example, here are some standard errors issued by Memcheck:

==15363== Uninitialised byte(s) found during client check request ==15363== at 0x80484A9: croak (varinfo1.c:28) ==15363== by 0x8048544: main (varinfo1.c:55) ==15363== Address 0x80497f7 is 7 bytes inside data symbol "global_i2" ==15363== ==15363== Uninitialised byte(s) found during client check request ==15363== at 0x80484A9: croak (varinfo1.c:28) ==15363== by 0x8048550: main (varinfo1.c:56) ==15363== Address 0xbea0d0cc is on thread 1's stack ==15363== in frame #1, created by main (varinfo1.c:45)

And here are the same errors with

--read-var-info=yes:

==15370== Uninitialised byte(s) found during client check request ==15370== at 0x80484A9: croak (varinfo1.c:28) ==15370== by 0x8048544: main (varinfo1.c:55) ==15370== Location 0x80497f7 is 0 bytes inside global_i2[7], ==15370== a global variable declared at varinfo1.c:41 ==15370== ==15370== Uninitialised byte(s) found during client check request ==15370== at 0x80484A9: croak (varinfo1.c:28) ==15370== by 0x8048550: main (varinfo1.c:56) ==15370== Location 0xbeb4a0cc is 0 bytes inside local var "local" ==15370== declared at varinfo1.c:46, in frame #1 of thread 1

--vgdb-poll=<number> [default: 5000]

As part of its main loop, the Valgrind scheduler will poll to check if some activity (such as an external command or some input from a gdb) has to be handled by gdbserver. This activity poll will be done after having run the given number of basic blocks (or slightly more than the given number of basic blocks). This poll is quite cheap so the default value is set relatively low. You might further decrease this value if vgdb cannot use ptrace system call to interrupt Valgrind if all threads are (most of the time) blocked in a system call.

--vgdb-shadow-registers=no|yes [default: no]

When activated, gdbserver will expose the Valgrind shadow registers to GDB. With this, the value of the Valgrind shadow registers can be examined or changed using GDB. Exposing shadow registers only works with GDB version 7.1 or later.

--vgdb-prefix=<prefix> [default: /tmp/vgdb-pipe]

To communicate with gdb/vgdb, the Valgrind gdbserver creates 3 files (2 named FIFOs and a mmap shared memory file). The prefix option controls the directory and prefix for the creation of these files.

--run-libc-freeres=<yes|no> [default: yes]

This option is only relevant when running Valgrind on Linux.

The GNU C library (libc.so), which is

used by all programs, may allocate memory for its own uses.

Usually it doesn't bother to free that memory when the program

ends—there would be no point, since the Linux kernel reclaims

all process resources when a process exits anyway, so it would

just slow things down.

The glibc authors realised that this behaviour causes leak

checkers, such as Valgrind, to falsely report leaks in glibc, when

a leak check is done at exit. In order to avoid this, they

provided a routine called __libc_freeres

specifically to make glibc release all memory it has allocated.

Memcheck therefore tries to run

__libc_freeres at exit.

Unfortunately, in some very old versions of glibc,

__libc_freeres is sufficiently buggy to cause

segmentation faults. This was particularly noticeable on Red Hat

7.1. So this option is provided in order to inhibit the run of

__libc_freeres. If your program seems to run

fine on Valgrind, but segfaults at exit, you may find that

--run-libc-freeres=no fixes that, although at the

cost of possibly falsely reporting space leaks in

libc.so.

--run-cxx-freeres=<yes|no> [default: yes]

This option is only relevant when running Valgrind on Linux or Solaris C++ programs.

The GNU Standard C++ library (libstdc++.so),

which is used by all C++ programs compiled with g++, may allocate memory

for its own uses. Usually it doesn't bother to free that memory when

the program ends—there would be no point, since the kernel reclaims

all process resources when a process exits anyway, so it would

just slow things down.

The gcc authors realised that this behaviour causes leak

checkers, such as Valgrind, to falsely report leaks in libstdc++, when

a leak check is done at exit. In order to avoid this, they

provided a routine called __gnu_cxx::__freeres

specifically to make libstdc++ release all memory it has allocated.

Memcheck therefore tries to run

__gnu_cxx::__freeres at exit.

For the sake of flexibility and unforeseen problems with

__gnu_cxx::__freeres, option

--run-cxx-freeres=no exists,

although at the cost of possibly falsely reporting space leaks in

libstdc++.so.

--sim-hints=hint1,hint2,...

Pass miscellaneous hints to Valgrind which slightly modify the simulated behaviour in nonstandard or dangerous ways, possibly to help the simulation of strange features. By default no hints are enabled. Use with caution! Currently known hints are:

lax-ioctls: Be very lax about ioctl

handling; the only assumption is that the size is

correct. Doesn't require the full buffer to be initialised

when writing. Without this, using some device drivers with a

large number of strange ioctl commands becomes very

tiresome.

fuse-compatible: Enable special

handling for certain system calls that may block in a FUSE

file-system. This may be necessary when running Valgrind

on a multi-threaded program that uses one thread to manage

a FUSE file-system and another thread to access that

file-system.

enable-outer: Enable some special

magic needed when the program being run is itself

Valgrind.

no-inner-prefix: Disable printing

a prefix > in front of each stdout or

stderr output line in an inner Valgrind being run by an

outer Valgrind. This is useful when running Valgrind

regression tests in an outer/inner setup. Note that the

prefix > will always be printed in

front of the inner debug logging lines.

no-nptl-pthread-stackcache:

This hint is only relevant when running Valgrind on Linux;

it is ignored on Solaris and Mac OS X.

The GNU glibc pthread library

(libpthread.so), which is used by

pthread programs, maintains a cache of pthread stacks.

When a pthread terminates, the memory used for the pthread

stack and some thread local storage related data structure

are not always directly released. This memory is kept in

a cache (up to a certain size), and is re-used if a new

thread is started.

This cache causes the helgrind tool to report some

false positive race condition errors on this cached

memory, as helgrind does not understand the internal glibc

cache synchronisation primitives. So, when using helgrind,

disabling the cache helps to avoid false positive race

conditions, in particular when using thread local storage

variables (e.g. variables using the

__thread qualifier).

When using the memcheck tool, disabling the cache ensures the memory used by glibc to handle __thread variables is directly released when a thread terminates.

Note: Valgrind disables the cache using some internal knowledge of the glibc stack cache implementation and by examining the debug information of the pthread library. This technique is thus somewhat fragile and might not work for all glibc versions. This has been successfully tested with various glibc versions (e.g. 2.11, 2.16, 2.18) on various platforms.

lax-doors: (Solaris only) Be very lax

about door syscall handling over unrecognised door file

descriptors. Does not require that full buffer is initialised

when writing. Without this, programs using libdoor(3LIB)

functionality with completely proprietary semantics may report

large number of false positives.

fallback-llsc: (MIPS and ARM64 only): Enables

an alternative implementation of Load-Linked (LL) and

Store-Conditional (SC) instructions. The standard implementation

gives more correct behaviour, but can cause indefinite looping on

certain processor implementations that are intolerant of extra

memory references between LL and SC. So far this is known only to

happen on Cavium 3 cores.

You should not need to use this flag, since the relevant cores are

detected at startup and the alternative implementation is

automatically enabled if necessary. There is no equivalent

anti-flag: you cannot force-disable the alternative

implementation, if it is automatically enabled.

The underlying problem exists because the "standard"

implementation of LL and SC is done by copying through LL and SC

instructions into the instrumented code. However, tools may

insert extra instrumentation memory references in between the LL

and SC instructions. These memory references are not present in

the original uninstrumented code, and their presence in the

instrumented code can cause the SC instructions to persistently

fail, leading to indefinite looping in LL-SC blocks.

The alternative implementation gives correct behaviour of LL and

SC instructions between threads in a process, up to and including

the ABA scenario. It also gives correct behaviour between a

Valgrinded thread and a non-Valgrinded thread running in a

different process, that communicate via shared memory, but only up

to and including correct CAS behaviour -- in this case the ABA

scenario may not be correctly handled.

--fair-sched=<no|yes|try> [default: no]

The --fair-sched option controls

the locking mechanism used by Valgrind to serialise thread

execution. The locking mechanism controls the way the threads

are scheduled, and different settings give different trade-offs

between fairness and performance. For more details about the

Valgrind thread serialisation scheme and its impact on

performance and thread scheduling, see

Scheduling and Multi-Thread Performance.

The value --fair-sched=yes

activates a fair scheduler. In short, if multiple threads are

ready to run, the threads will be scheduled in a round robin

fashion. This mechanism is not available on all platforms or

Linux versions. If not available,

using --fair-sched=yes will cause Valgrind to

terminate with an error.

You may find this setting improves overall responsiveness if you are running an interactive multithreaded program, for example a web browser, on Valgrind.

The value --fair-sched=try

activates fair scheduling if available on the

platform. Otherwise, it will automatically fall back

to --fair-sched=no.

The value --fair-sched=no activates

a scheduler which does not guarantee fairness

between threads ready to run, but which in general gives the

highest performance.

--kernel-variant=variant1,variant2,...

Handle system calls and ioctls arising from minor variants of the default kernel for this platform. This is useful for running on hacked kernels or with kernel modules which support nonstandard ioctls, for example. Use with caution. If you don't understand what this option does then you almost certainly don't need it. Currently known variants are:

bproc: support the

sys_broc system call on x86. This is for

running on BProc, which is a minor variant of standard Linux which

is sometimes used for building clusters.

android-no-hw-tls: some

versions of the Android emulator for ARM do not provide a

hardware TLS (thread-local state) register, and Valgrind

crashes at startup. Use this variant to select software

support for TLS.

android-gpu-sgx5xx: use this to

support handling of proprietary ioctls for the PowerVR SGX

5XX series of GPUs on Android devices. Failure to select

this does not cause stability problems, but may cause